Gesture recognizers are some of my favourite pieces of software in iOS. Since their introduction, they have steadily gained adoption within the iOS codebase.

If you have dealt with the mess of low-level touch handling, you will appreciate the simplicity and composability of gesture recognizers. They are also well abstracted: the base class (UIGestureRecognizer) was updated only once (in iOS 7) across the 5 major SDK releases. Mastering them is almost a must for veteran iOS developers.

Their use cases range from tapping, panning, pinching and edge swiping to any custom gesture you can dream of. Combined with animation and physics, they can make your app magical.

But the magic always stops when we try to replicate a button (UIButton) with a tap gesture.

Broken Illusion

With a tap gesture, you will soon find out that there’s no way to provide visual feedback to your users when the touch sequence begins. To be more specific, you can’t highlight your view when the user touches down. This UX crime makes your app feel inconsistent and less polished.

This is an unfortunate shortcoming of the tap gesture recognizer. Here’s why…

Tap gesture is a discrete gesture

What is a discrete gesture?

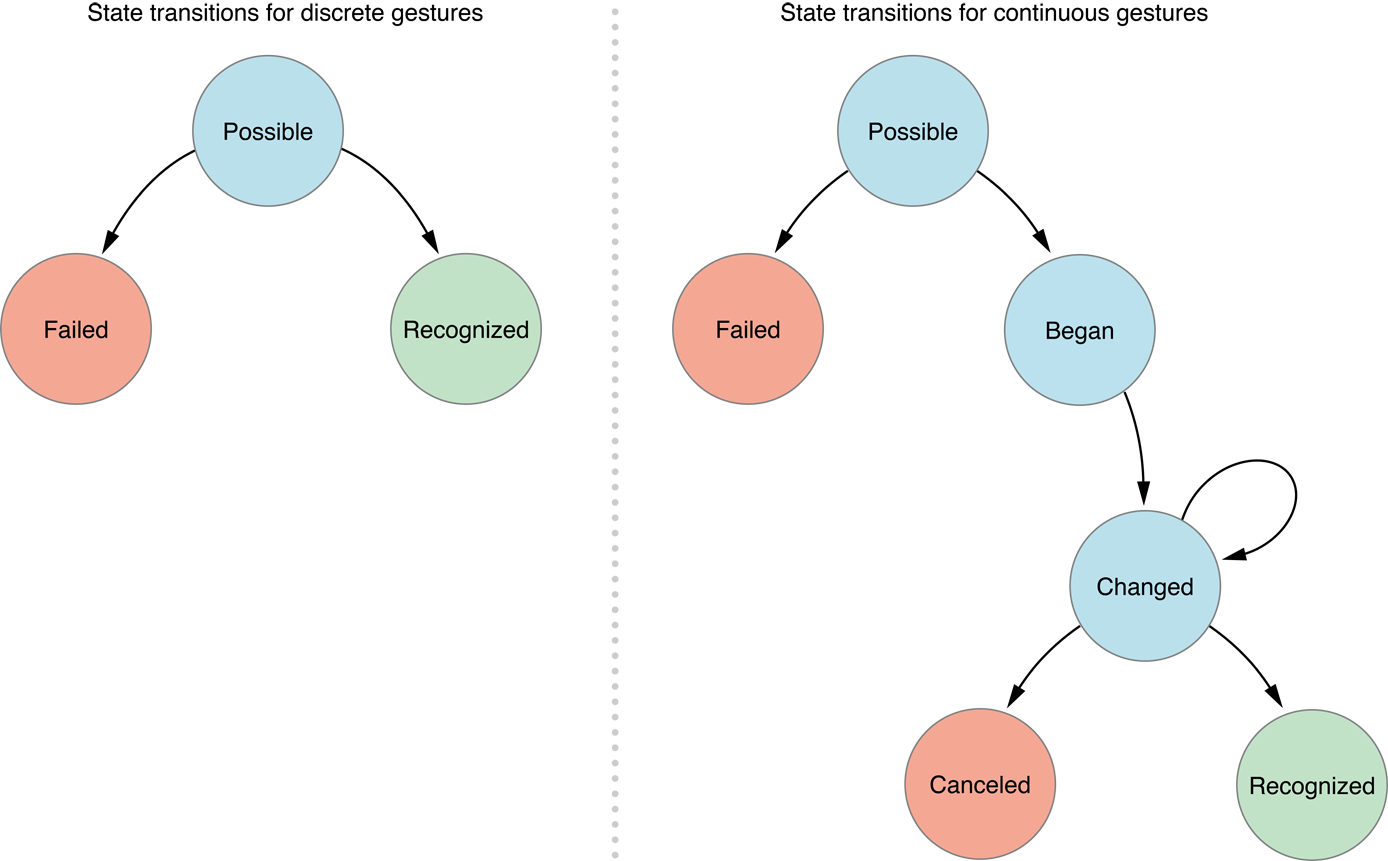

There are 2 kind of gesture recognizers: Decrete and Continouous. They differ mainly in how they transverse between their internal states. Below is an illustration that describes the process:

“State machines for gesture recognizers” from Event Handling Guide for iOS: Gesture Recognizers: Defining How Gesture Recognizers Interact

As you can see, discrete gestures have simpler states than continuous ones. A discrete gesture has no intermediate states: it either happens or fails. So in the tap gesture's case, you either have tapped or you have not. A tap is recognized when you lift your finger, and that’s the only event you will receive from a tap gesture. It does not emit other (intermediate) touch events, such as touch down.
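To make this concrete, here is a minimal sketch of a tap handler. The `TapDemoViewController` class, its `customButtonView` property and the frame values are hypothetical names chosen for illustration. The action message arrives only once, after the finger has lifted, so there is no earlier point at which the view could be highlighted.

```objc
#import <UIKit/UIKit.h>

// Hypothetical view controller, used only for illustration.
@interface TapDemoViewController : UIViewController
@property (nonatomic, strong) UIView *customButtonView;
@end

@implementation TapDemoViewController

- (void)viewDidLoad {
    [super viewDidLoad];

    // A plain view standing in for a custom button (frame is arbitrary).
    self.customButtonView = [[UIView alloc] initWithFrame:CGRectMake(20, 80, 120, 44)];
    self.customButtonView.backgroundColor = [UIColor whiteColor];
    [self.view addSubview:self.customButtonView];

    UITapGestureRecognizer *tapGestureRecognizer =
        [[UITapGestureRecognizer alloc] initWithTarget:self
                                                action:@selector(handleTapGesture:)];
    [self.customButtonView addGestureRecognizer:tapGestureRecognizer];
}

- (void)handleTapGesture:(UITapGestureRecognizer *)tapGestureRecognizer {
    // A discrete gesture sends its action once, when it is recognized.
    // UIGestureRecognizerStateEnded is the same value as
    // UIGestureRecognizerStateRecognized.
    if (tapGestureRecognizer.state == UIGestureRecognizerStateEnded) {
        NSLog(@"Tapped!");
    }
}

@end
```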

Button touch events

But buttons aren’t that simple. Not only do buttons respond to taps, they also respond to touch down. Heck, buttons respond to more than just these 2 cases. Just take a look at all the touch events a button can dispatch:

    typedef NS_OPTIONS(NSUInteger, UIControlEvents) {
        UIControlEventTouchDown        = 1 << 0,
        UIControlEventTouchDownRepeat  = 1 << 1,
        UIControlEventTouchDragInside  = 1 << 2,
        UIControlEventTouchDragOutside = 1 << 3,
        UIControlEventTouchDragEnter   = 1 << 4,
        UIControlEventTouchDragExit    = 1 << 5,
        UIControlEventTouchUpInside    = 1 << 6,
        UIControlEventTouchUpOutside   = 1 << 7,
        UIControlEventTouchCancel      = 1 << 8,
        // …
    };

Clearly, a tap gesture isn’t able to mimic all these events. While it’s totally capable of telling the app about the user’s taps, it doesn’t give us the opportunity to provide visual feedback during the user’s touch sequence.

Long press gesture

So it’s clear that we need a continuous gesture, but which one can we utilise? Or do we have to implement a custom one?

Well, we are in luck: we can repurpose the long press gesture to replicate most of the touch events supported by a button. All we have to do is reduce the minimum press duration to a value that makes the gesture begin almost instantly, removing the long from long press gesture.

    longPressGesture.minimumPressDuration = 0.001;

A long press gesture is a continuous gesture, and thus it goes through the began, changed, ended and cancelled states during the touch sequence. We can then easily query the gesture’s state and map it to the corresponding touch event. For example, to support highlighting and touch up inside with a long press gesture:

    - (void)handleLongPressGesture:(UILongPressGestureRecognizer *)longPressGestureRecognizer {
        UIView *view = longPressGestureRecognizer.view;

        switch (longPressGestureRecognizer.state) {
            case UIGestureRecognizerStateBegan: {
                // Touch down: highlight the view.
                view.backgroundColor = [UIColor blueColor];
            } break;
            case UIGestureRecognizerStateEnded: {
                // Touch up: remove the highlight.
                view.backgroundColor = [UIColor whiteColor];

                // Count it as "touch up inside" only if the finger lifted within the view's bounds.
                CGPoint location = [longPressGestureRecognizer locationInView:view];
                BOOL touchInside = CGRectContainsPoint(view.bounds, location);
                if (touchInside) {
                    NSLog(@"Touch Up Inside!");
                }
            } break;
            case UIGestureRecognizerStateCancelled: {
                // Touch cancelled: remove the highlight.
                view.backgroundColor = [UIColor whiteColor];
            } break;
            default: {
            } break;
        }
    }

We highlight the view by setting its background color to blue when the gesture begins, and we set it back to white when the gesture ends or is cancelled. We also determine whether the gesture ended with the user lifting their finger inside the view.
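For completeness, the handler above still has to be attached to a view. Here is a minimal sketch of that setup, assuming a hypothetical `LongPressDemoViewController` with a `customButtonView` property (both names, and the frame, are made up for illustration). The handler body is reduced to a stub here; the state handling shown above would go in its place.

```objc
#import <UIKit/UIKit.h>

// Hypothetical view controller; only the wiring is shown.
@interface LongPressDemoViewController : UIViewController
@property (nonatomic, strong) UIView *customButtonView;
@end

@implementation LongPressDemoViewController

- (void)viewDidLoad {
    [super viewDidLoad];

    // A plain view standing in for a custom button (frame is arbitrary).
    self.customButtonView = [[UIView alloc] initWithFrame:CGRectMake(20, 80, 120, 44)];
    self.customButtonView.backgroundColor = [UIColor whiteColor];
    [self.view addSubview:self.customButtonView];

    UILongPressGestureRecognizer *longPressGesture =
        [[UILongPressGestureRecognizer alloc] initWithTarget:self
                                                      action:@selector(handleLongPressGesture:)];
    // Near-zero duration so the gesture begins almost as soon as the finger lands.
    longPressGesture.minimumPressDuration = 0.001;
    [self.customButtonView addGestureRecognizer:longPressGesture];
}

- (void)handleLongPressGesture:(UILongPressGestureRecognizer *)longPressGestureRecognizer {
    // Stub: map the began, ended and cancelled states to highlighting
    // and touch up inside here.
    if (longPressGestureRecognizer.state == UIGestureRecognizerStateBegan) {
        NSLog(@"Touch Down!");
    }
}

@end
```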

Taking it a step further

Much of the behavior is well defined by both the button and the gesture recognizer, and there’s a natural mapping between them. Taking advantage of this, I created a gesture recognizer called LXTouchGestureRecognizer. It translates long press gesture states into touch events, allowing you to make any view respond like a button.

The code can be found in this gist or you can scroll down to the bottom where I have embedded the code.

Here’s an example of how to use the touch gesture recognizer:

    - (void)viewDidLoad {
        [super viewDidLoad];

        LXTouchGestureRecognizer *touchGestureRecognizer = [[LXTouchGestureRecognizer alloc] init];
        [touchGestureRecognizer addTarget:self action:@selector(handleTouchUpInside:) forControlEvents:UIControlEventTouchUpInside];
        [self.customButtonView addGestureRecognizer:touchGestureRecognizer];
    }

    - (void)handleTouchUpInside:(LXTouchGestureRecognizer *)touchGestureRecognizer {
        NSLog(@"Touch Up Inside!");
    }

Conclusion

I didn’t figure this out on my own. I chanced upon this solution while prying into the UISwitch implementation. It’s often insightful to dig into Apple’s implementation details, like the article about SVPulsingAnnotationView (recreating MKUserLocationView) written by Sam Vermette, which I highly recommend.

Thanks for reading!